Summary: MLPerf™ Inference v2.1 with NVIDIA GPU-Based Benchmarks on Dell PowerEdge Servers

By a mysterious writer

Description

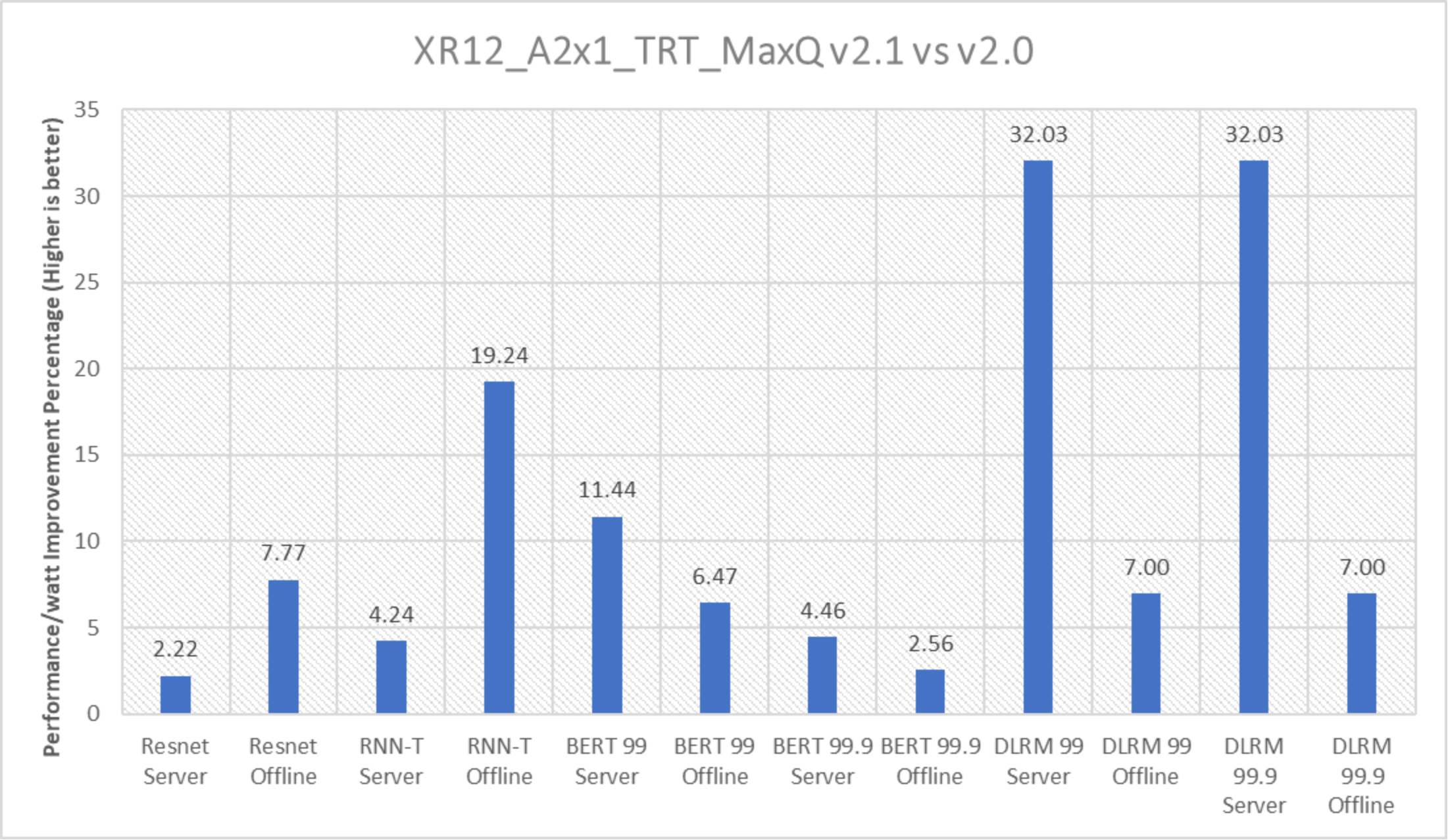

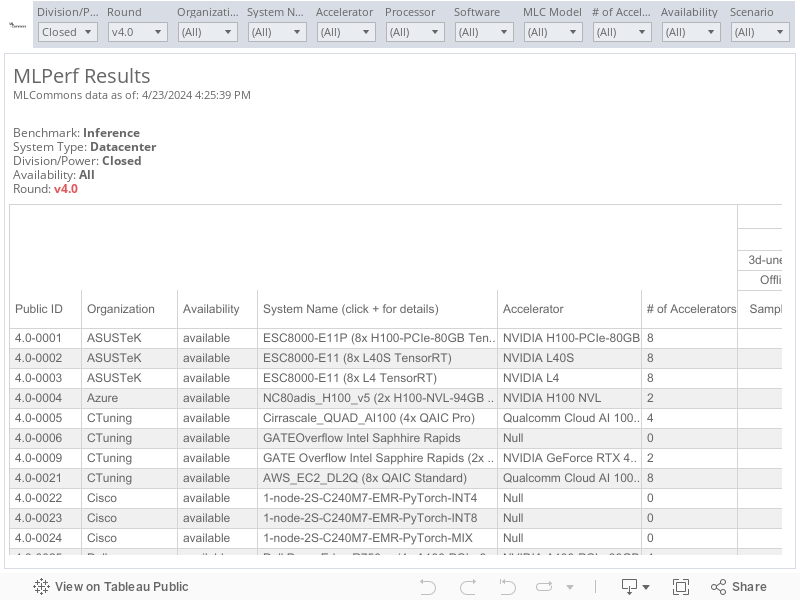

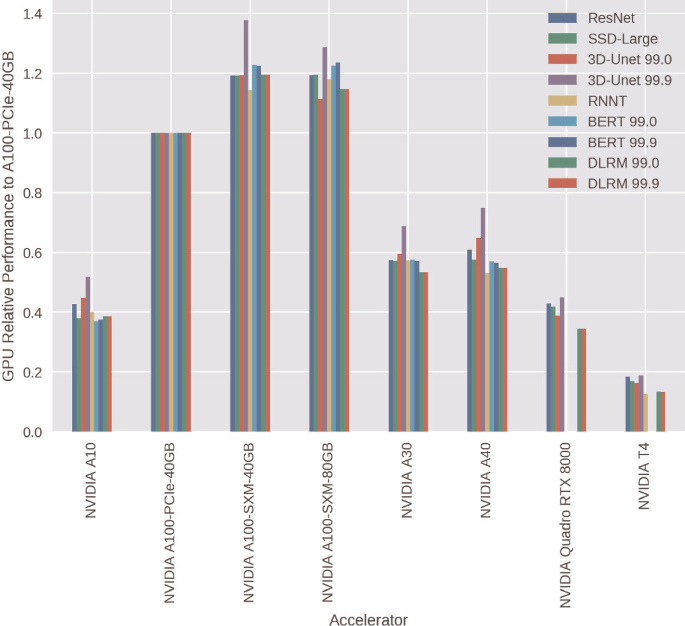

This white paper describes Dell Technologies' successful submission to MLPerf Inference v2.1, which is Dell's sixth round of MLPerf Inference submissions. It provides an overview of the submission and highlights the performance of the servers included in it.

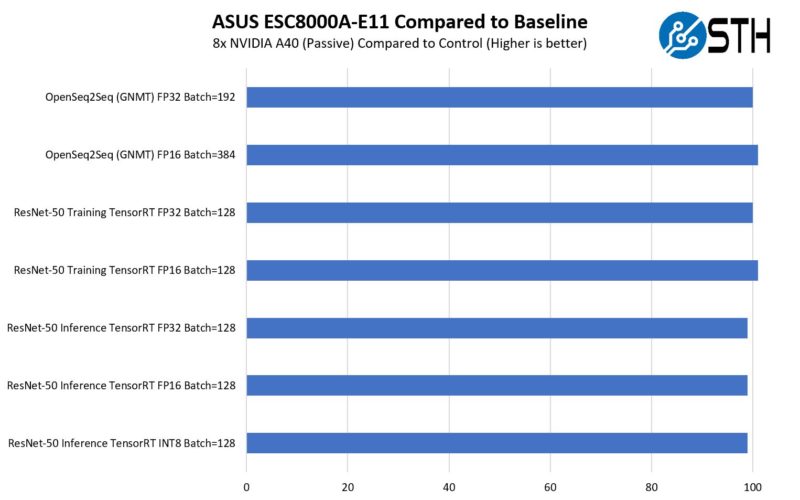

Benchmark MLPerf Inference: Datacenter

Everyone is a Winner: Interpreting MLPerf Inference Benchmark

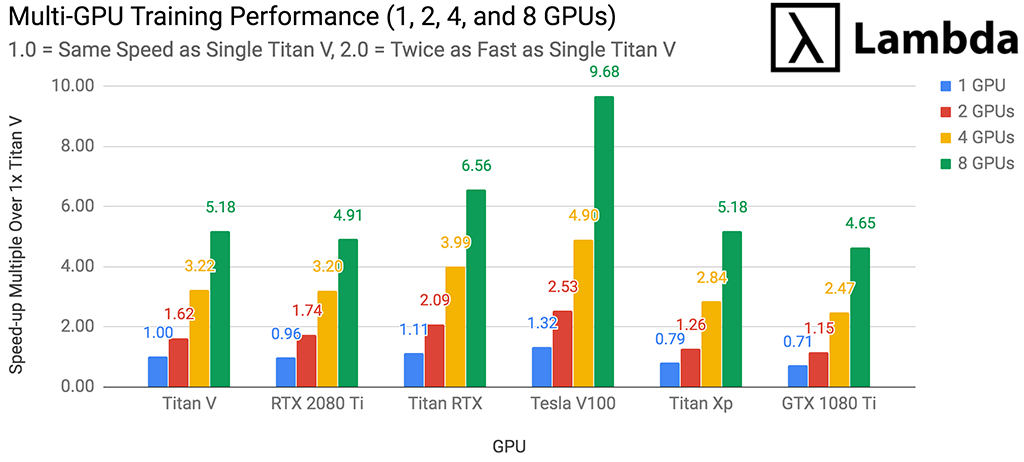

GPU Server for AI - NVIDIA H100 or A100

MLPerf Inference: Startups Beat Nvidia on Power Efficiency

G593-SD0 (rev. AAX1) GPU Servers - GIGABYTE Japan

NVIDIA A100 40G GPU

MLPerf Inference Virtualization in VMware vSphere Using NVIDIA