Policy or Value? Loss Function and Playing Strength in AlphaZero-like Self-play

By a mysterious writer

Description

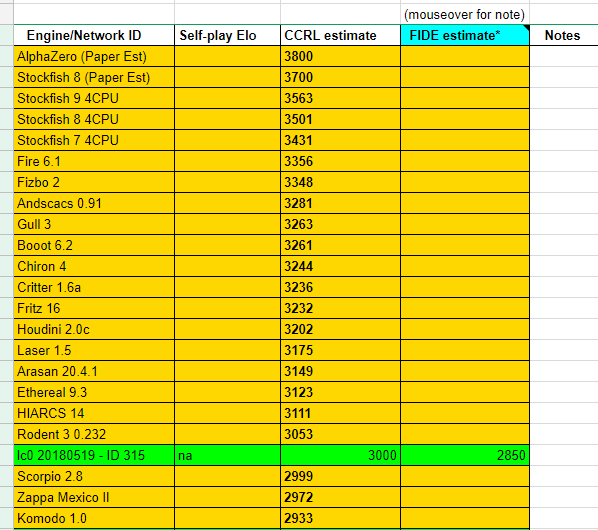

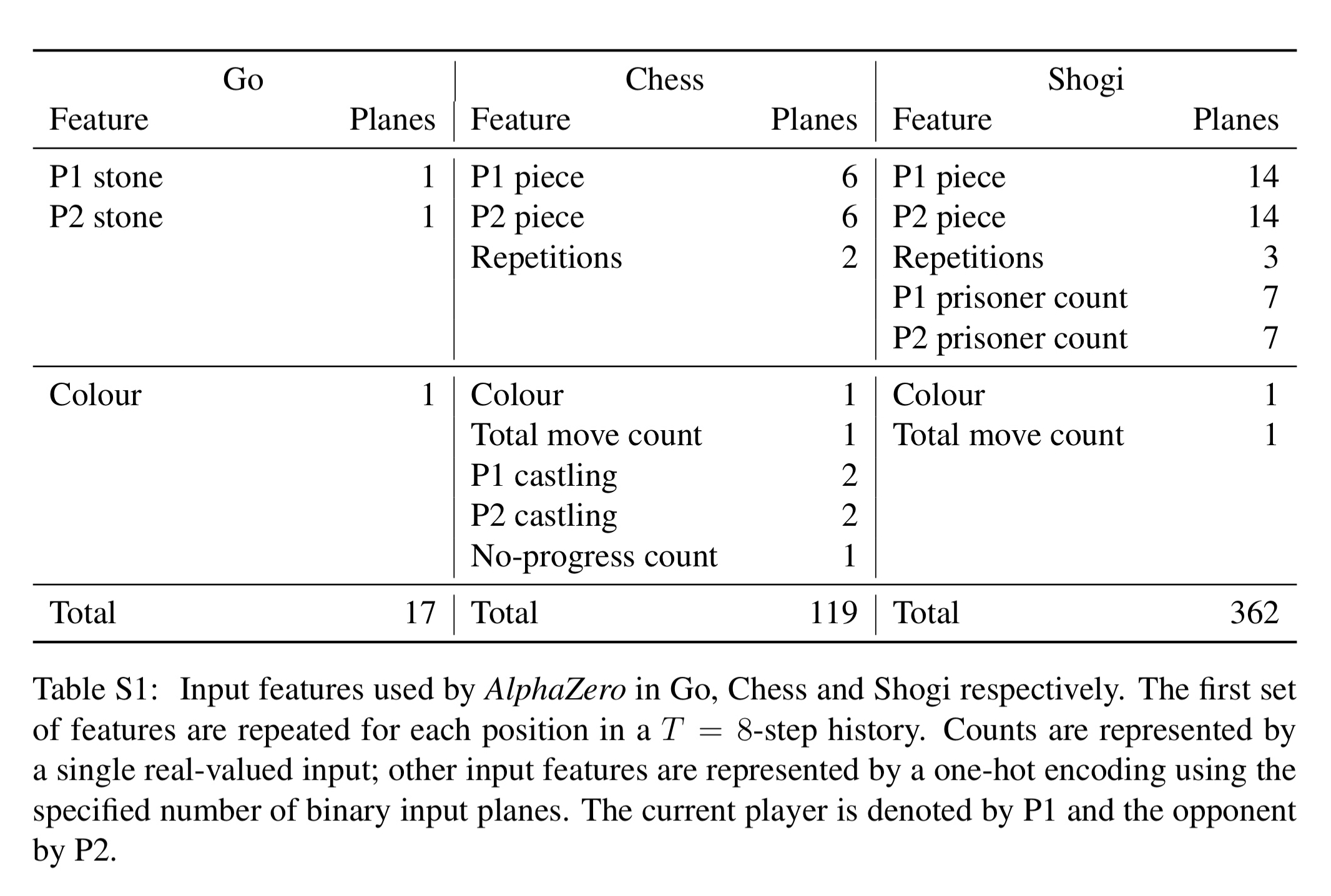

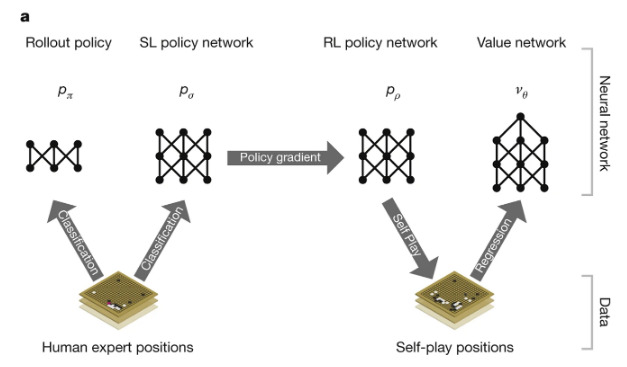

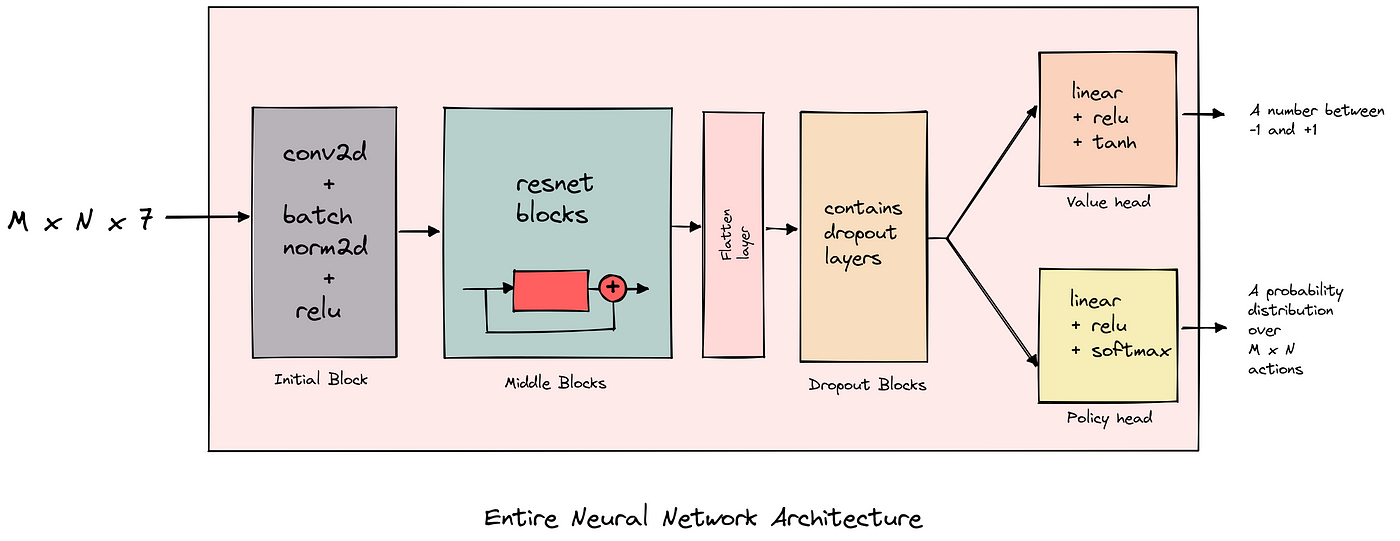

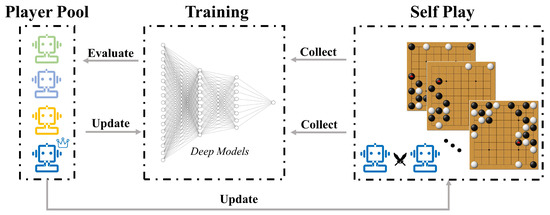

Recently, AlphaZero has achieved outstanding performance in playing Go, Chess, and Shogi. Players in AlphaZero consist of a combination of Monte Carlo Tree Search and a Deep Q-network that is trained using self-play. The unified Deep Q-network has a policy head and a value head. In AlphaZero, during training, the optimization minimizes the sum of the policy loss and the value loss. However, it is not clear if, and under which circumstances, other formulations of the objective function are better. Therefore, in this paper, we perform experiments with combinations of these two optimization targets.

Self-play is a computationally intensive method. By using small games, we are able to perform multiple test cases. We use a lightweight open-source reimplementation of AlphaZero on two different games. We investigate optimizing the two targets independently, and also try different combinations (sum and product).

Our results indicate that, at least for relatively simple games such as 6x6 Othello and Connect Four, optimizing the sum, as AlphaZero does, performs consistently worse than other objectives, in particular optimizing only the value loss. Moreover, we find that care must be taken in computing the playing strength. Tournament Elo ratings differ from training Elo ratings: training Elo ratings, though cheap to compute and frequently reported, can be misleading and may lead to bias. It is currently not clear how these results transfer to more complex games, and whether there is a phase transition between our setting and the AlphaZero application to Go, where the sum is seemingly the better choice.
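The four objectives compared in the abstract (policy only, value only, sum, and product) can be illustrated with a minimal sketch for a single training example. It assumes the usual AlphaZero-style definitions, namely a cross-entropy policy loss against the MCTS visit distribution and a squared-error value loss against the game outcome. The function names, the `mode` argument, and the omission of weight regularization are illustrative choices, not taken from the paper's code.

```python
import math

def policy_loss(pi, p, eps=1e-12):
    """Cross-entropy between the MCTS target distribution pi and the network policy p.
    eps guards against log(0); it is an illustrative choice, not from the paper."""
    return -sum(t * math.log(q + eps) for t, q in zip(pi, p))

def value_loss(z, v):
    """Squared error between the game outcome z and the predicted value v."""
    return (z - v) ** 2

def combined_loss(pi, p, z, v, mode="sum"):
    """Combine the two targets in one of the four ways discussed in the abstract.
    The mode names are hypothetical labels for this sketch."""
    lp = policy_loss(pi, p)
    lv = value_loss(z, v)
    if mode == "policy":
        return lp
    if mode == "value":
        return lv
    if mode == "sum":       # the combination AlphaZero minimizes
        return lp + lv
    if mode == "product":
        return lp * lv
    raise ValueError(f"unknown mode: {mode}")

# Example: two legal moves, MCTS prefers the first, the network is undecided.
pi, p = [1.0, 0.0], [0.5, 0.5]
z, v = 1.0, 0.0
losses = {m: combined_loss(pi, p, z, v, m)
          for m in ("policy", "value", "sum", "product")}
```

In a real training loop these quantities would be averaged over a mini-batch and differentiated by a deep-learning framework; the sketch only shows how the scalar objectives differ.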

Policy and value heads are from AlphaGo Zero, not Alpha Zero · Issue #47 · glinscott/leela-chess · GitHub

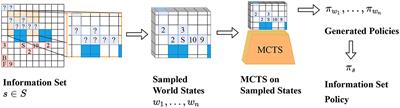

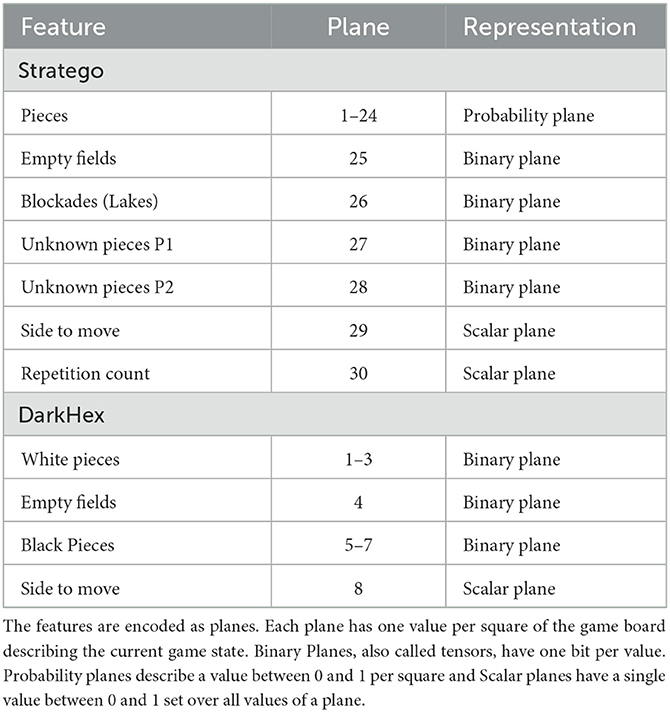

AlphaZe∗∗: AlphaZero-like baselines for imperfect information games are surprisingly strong - Frontiers

Does the neural net of AlphaZero only evaluate the score of a given chess position or does it do something else? - Quora

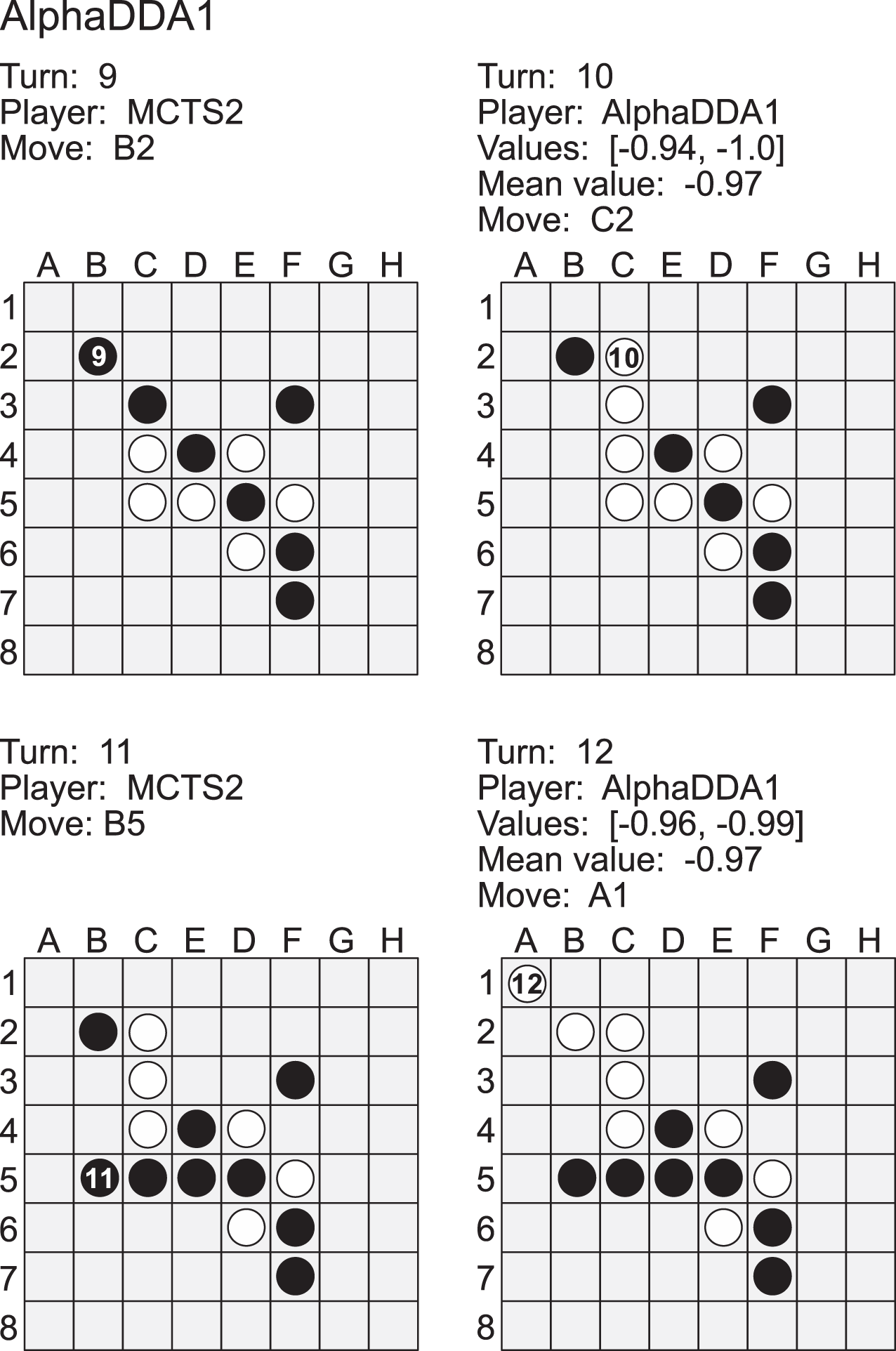

AlphaDDA: strategies for adjusting the playing strength of a fully trained AlphaZero system to a suitable human training partner [PeerJ]

A general reinforcement learning algorithm that masters chess, shogi, and Go through self-play

AlphaGo/AlphaGoZero/AlphaZero/MuZero: Mastering games using progressively fewer priors

AlphaZero from scratch in PyTorch for the game of Chain Reaction — Part 3, by Bentou


Policy or Value? Loss Function and Playing Strength in AlphaZero-like Self-play

Electronics, Free Full-Text

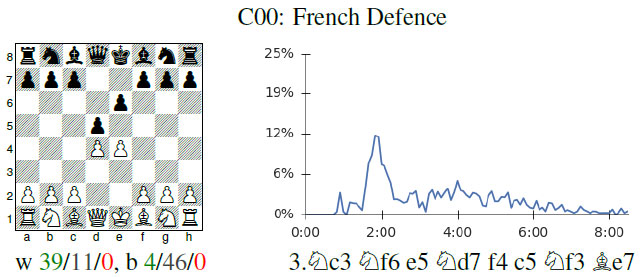

The future is here – AlphaZero learns chess
